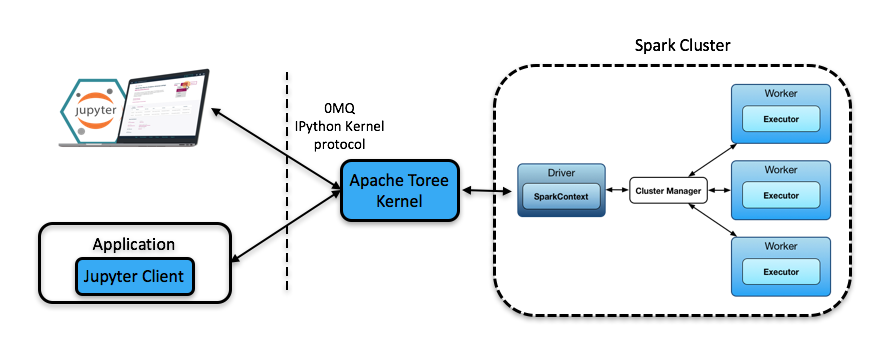

Toree is a Scala kernel for the Jupyter Notebook platform providing interactive access to Apache Spark.

Apache Toree is a kernel for the Jupyter Notebook platform providing interactive access to Apache Spark. It has been developed using the IPython messaging protocol and 0MQ, and despite the protocol’s name, Apache Toree currently exposes the Spark programming model in Scala, Python, and R.
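To make the messaging layer more concrete, the following is a minimal sketch of the dictionary form of an `execute_request` message as described by the Jupyter (formerly IPython) messaging protocol. The helper name `make_execute_request`, the username, the protocol version string, and the sample Scala snippet are assumptions chosen for illustration. On the wire the message is serialized into signed multipart 0MQ frames, which this sketch leaves out.

```python
import json
import uuid
from datetime import datetime, timezone


def make_execute_request(code, session_id, username="example-user"):
    """Build the dictionary form of a Jupyter `execute_request` message.

    Hypothetical helper for illustration; it is not part of Apache Toree.
    """
    header = {
        "msg_id": uuid.uuid4().hex,        # unique per message
        "session": session_id,             # identifies the client session
        "username": username,              # assumed value
        "date": datetime.now(timezone.utc).isoformat(),
        "msg_type": "execute_request",
        "version": "5.0",                  # assumed protocol version
    }
    content = {
        "code": code,                      # the snippet the kernel should run
        "silent": False,
        "store_history": True,
        "user_expressions": {},
        "allow_stdin": False,
        "stop_on_error": True,
    }
    return {
        "header": header,
        "parent_header": {},               # empty: this message starts a conversation
        "metadata": {},
        "content": content,
    }


# Example: a Scala snippet that a Spark-backed kernel could evaluate.
request = make_execute_request(
    "sc.parallelize(1 to 100).sum()", session_id=uuid.uuid4().hex
)
print(json.dumps(request, indent=2))
```

The kernel answers such a request with reply and output messages whose `parent_header` echoes the request header, which is how a client matches results to the code it sent.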

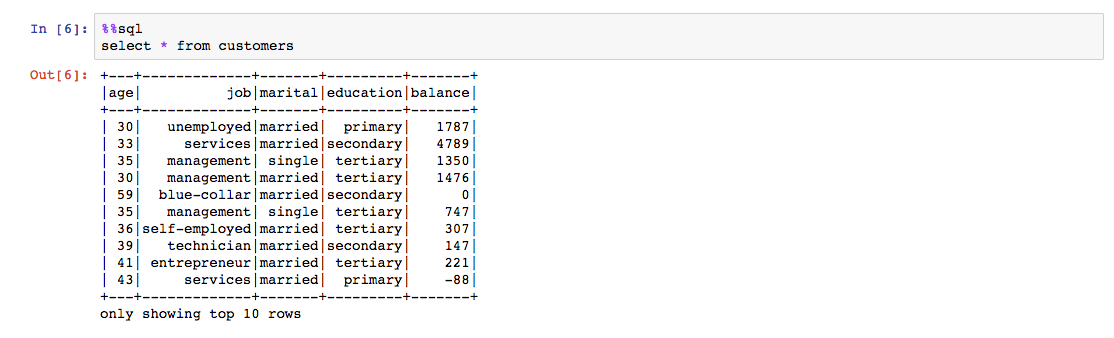

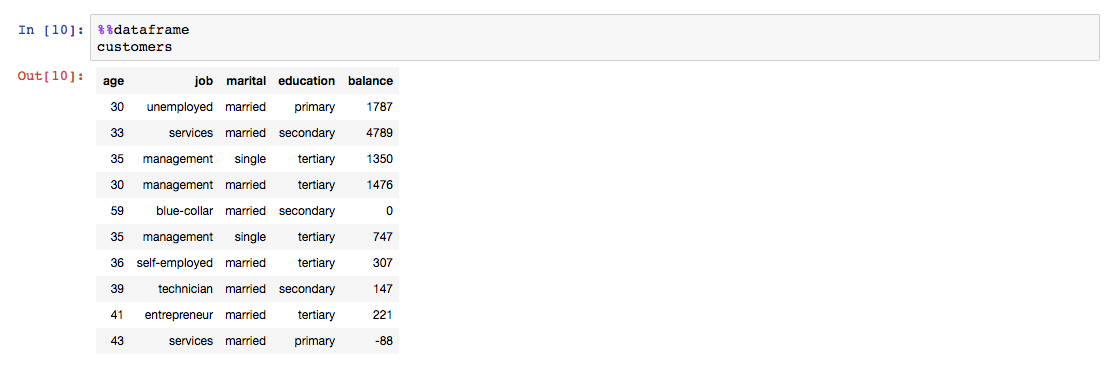

Toree supports a number of interaction scenarios. In one common case, applications send snippets of code which are then executed by Spark, and the results are returned directly to the application. This style of interaction is what users of Notebooks experience when they evaluate code in cells. Instead of sending raw code, an application can send magics, which might be commands to add a JAR to the Spark execution context or a call to execute a shell command such as “ls”. Toree provides a well-defined mechanism to associate functionality with magics, and this is a useful point of extensibility of the system.
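The idea of associating functionality with magics can be sketched as a small dispatch table. This is a simplified illustration in Python, not Toree's actual implementation or API: the `MagicRegistry` class, the `add_jar` handler, the `fake_run_code` stand-in for the interpreter, and the case-insensitive lookup are all assumptions made for the example.

```python
import shlex


class MagicRegistry:
    """Hypothetical registry that maps magic names to handler functions."""

    def __init__(self):
        self._handlers = {}

    def register(self, name):
        """Decorator that associates a handler with a magic name."""
        def decorator(func):
            self._handlers[name.lower()] = func
            return func
        return decorator

    def dispatch(self, snippet, run_code):
        """Run a magic if the snippet starts with '%', else run it as code."""
        stripped = snippet.strip()
        if not stripped.startswith("%"):
            return run_code(snippet)
        parts = stripped[1:].split(None, 1)
        name = parts[0] if parts else ""
        rest = parts[1] if len(parts) > 1 else ""
        handler = self._handlers.get(name.lower())
        if handler is None:
            raise KeyError(f"unknown magic: %{name}")
        return handler(shlex.split(rest))


registry = MagicRegistry()
loaded_jars = []  # stands in for the JARs on the Spark execution context


@registry.register("addjar")
def add_jar(args):
    """Illustrative handler: record the JAR location instead of loading it."""
    loaded_jars.append(args[0])
    return f"added {args[0]}"


def fake_run_code(code):
    """Stand-in for sending code to the interpreter."""
    return f"executed: {code}"


print(registry.dispatch("%addjar file:///tmp/example.jar", fake_run_code))
print(registry.dispatch("val x = 1 + 1", fake_run_code))
```

New behavior is added by registering another handler, which is the sense in which magics act as an extension point: the dispatch logic itself does not change.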

Applications that want to work with Spark can be located remotely from a Spark cluster and use an Apache Toree Client or a Jupyter Client to communicate with an Apache Toree Server running on the cluster, or they can communicate directly with the Apache Toree Server. Multiple clients or applications can communicate with a single kernel, which contains a Spark application context, and this provides a simple form of multi-tenancy.

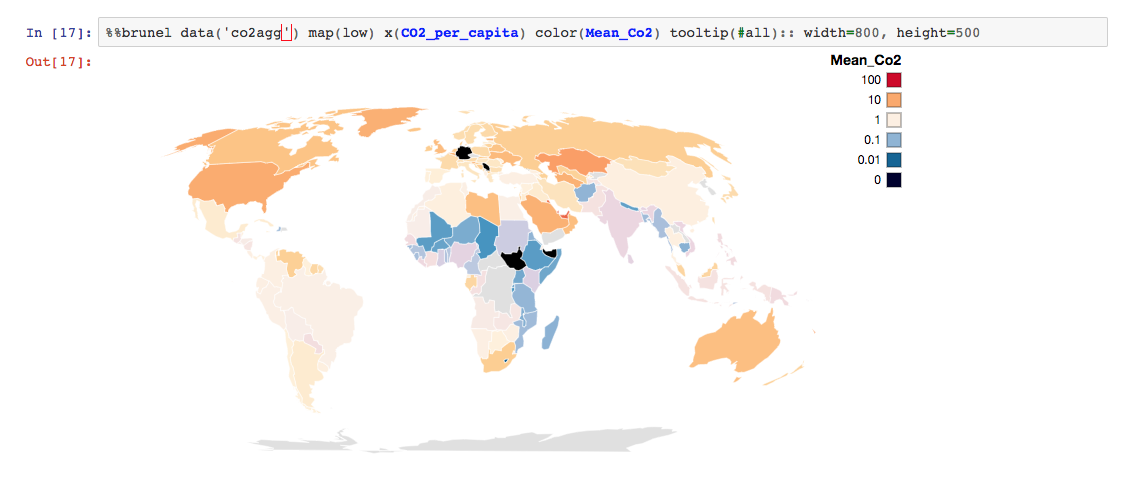

Apache Toree, via extensions like Brunel for Apache Toree, supports rich visualizations that integrate directly with Spark DataFrame APIs.

Apache Toree provides a set of magics that enhance the user experience when manipulating data coming from Spark tables or data